If Claude is writing code faster than you can review it, you are stacking risk instead of moving fast. The goal of Claude Code best practices is simple: keep the speed, but make outputs predictable, testable, and safe to ship.

This guide gives you a tight workflow, a copy-paste checklist, and the best tools and resources to make Claude Code behave like a disciplined teammate instead of a lottery machine.

Claude Code best practices in plain English

Claude Code is an agentic coding tool that can read your project, edit files, run commands, and create commits. That power is also where teams get burned. You will get the most reliable results when you treat Claude like a junior engineer with super speed: you give clear constraints, you demand a plan, you enforce tests, and you gate changes.

Here is the core idea you can reuse in any repo:

- Reduce ambiguity: If you do not define scope, Claude will.

- Force small changes: Big, multi-file rewrites are where quality collapses.

- Make correctness measurable: "Works" means "passes tests" and meets acceptance criteria.

- Assume outputs can be wrong: Especially around auth, edge cases, and security.

If you are building internal tools or trying to productize your expertise into a niche SaaS, this discipline is how you ship "in days, not months" without waking up to a broken production release. Our own content library is built around the same idea: turn messy intent into structured systems you can scale, like the workflows in the AI app builder prompts guide.

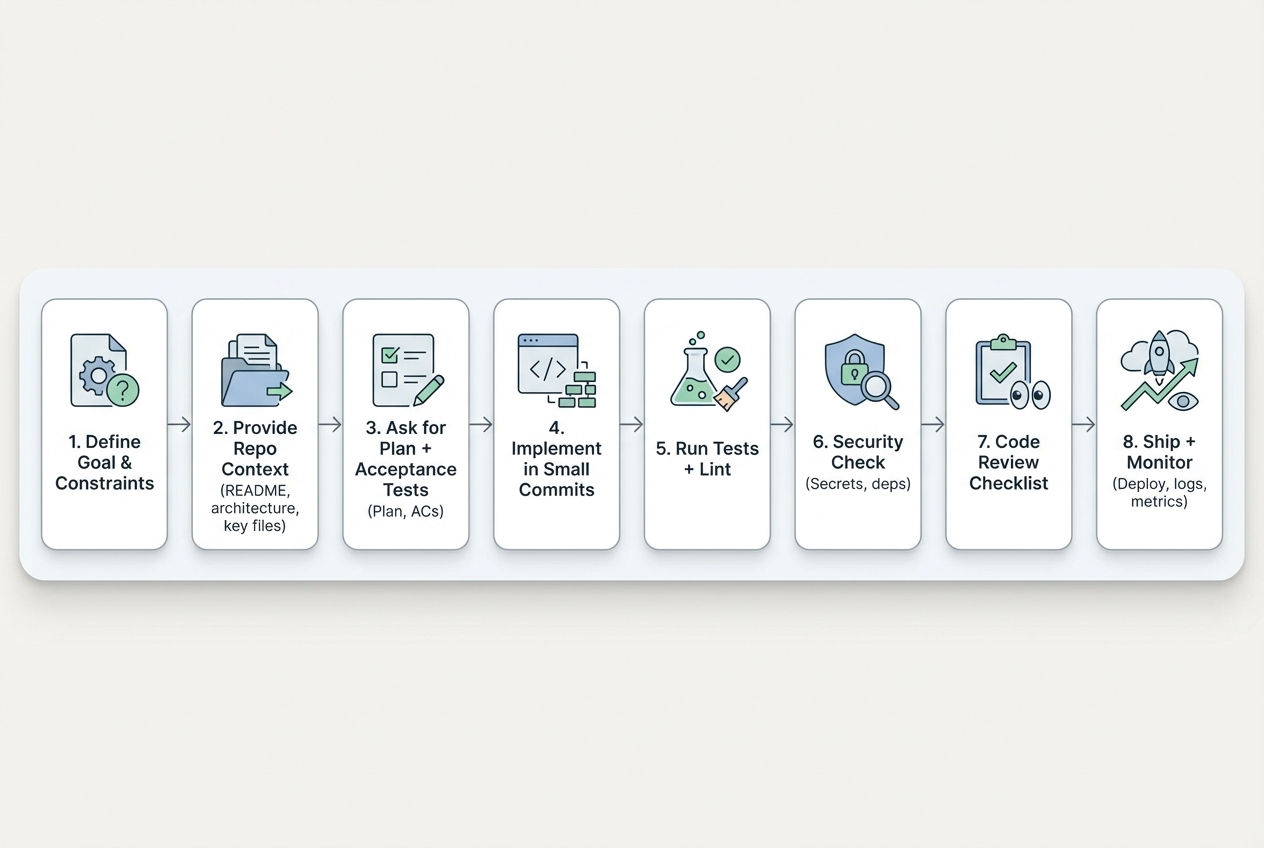

The workflow that keeps Claude outputs shippable

Use this loop for features, bug fixes, refactors, and "please clean this up" tasks.

- Define the outcome: Give Claude a finish line it cannot improvise.

  - User story: State who the change is for and what they should be able to do afterward.

  - Constraints: List the tech stack, performance, style, and backward-compatibility requirements.

  - Do-not-touch areas: Name the files and modules Claude must leave alone.

- Provide bounded context: Give the minimum context that makes the task solvable.

  - Target files: Point Claude to the exact files.

  - Current behavior: Describe what happens today and include the failing logs.

  - Key contracts: Paste the relevant types, schemas, and API contracts.

- Ask for a plan before code: Make the approach reviewable before the diff exists.

  - Step-by-step plan: Ask for the sequence of changes before any file is edited.

  - Acceptance criteria: Have Claude restate the criteria the change must meet.

  - Test plan: Ask which tests will be added or updated to prove the change works.

- Implement in small commits: Keep diffs reviewable and rollback simple.

  - One goal per change: Each commit fixes one bug or adds one behavior.

  - Reviewable diffs: Keep each diff small enough to review in minutes, not hours.

  - Refactor discipline: Avoid wide refactors unless you can prove they are safe.

- Run tests and linters locally: Make failures repeatable before you push.

  - Minimum bar: Treat green CI as the minimum bar, not the finish line.

  - Actionable failures: Make sure every failure points to a cause and reproduces on the next run.

- Add security guardrails: Assume the agent will eventually try something unsafe.

  - Secret hygiene: Keep secrets out of prompts.

  - Manual security review: Review auth and access control changes by hand.

  - Supply chain checks: Vet every new dependency for supply chain risk.

- Review with a checklist: Review gets faster when the standard is fixed.

  - Quality gates: Check correctness, edge cases, performance, security, and observability.

- Ship and monitor: Treat release as part of the workflow, not an afterthought.

  - Release notes: Capture what changed and why.

  - Monitoring: Add basic monitoring and error reporting.
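The "implement in small commits" step is the easiest one in this loop to enforce mechanically. Below is a minimal Python sketch, assuming your work is compared against a base branch named `main`, that reads `git diff --numstat` output and fails when a change touches too many files or lines. The limits `MAX_FILES` and `MAX_CHANGED_LINES` are hypothetical defaults you should tune to your team.

```python
import subprocess
import sys
from dataclasses import dataclass

# Hypothetical limits: tune them to what your team can review in a few minutes.
MAX_FILES = 10
MAX_CHANGED_LINES = 400


@dataclass
class DiffStats:
    files: int
    changed_lines: int


def parse_numstat(numstat: str) -> DiffStats:
    """Parse `git diff --numstat` output: one tab-separated 'added deleted path' row per file."""
    files = 0
    changed = 0
    for row in numstat.splitlines():
        if not row.strip():
            continue
        added, deleted, _path = row.split("\t", 2)
        files += 1
        # Git prints '-' instead of counts for binary files; count them as files only.
        if added != "-":
            changed += int(added)
        if deleted != "-":
            changed += int(deleted)
    return DiffStats(files=files, changed_lines=changed)


def check_diff(stats: DiffStats) -> list[str]:
    """Return a list of problems; an empty list means the diff is small enough."""
    problems = []
    if stats.files > MAX_FILES:
        problems.append(f"{stats.files} files changed (limit {MAX_FILES})")
    if stats.changed_lines > MAX_CHANGED_LINES:
        problems.append(f"{stats.changed_lines} lines changed (limit {MAX_CHANGED_LINES})")
    return problems


def main(base: str = "main") -> int:
    """Compare the working tree against `base` and return a process exit code."""
    out = subprocess.run(
        ["git", "diff", "--numstat", base],
        capture_output=True, text=True, check=True,
    ).stdout
    problems = check_diff(parse_numstat(out))
    for problem in problems:
        print(f"diff too large: {problem}", file=sys.stderr)
    return 1 if problems else 0
```

Call `sys.exit(main())` from a CI step or a pre-push hook. Line counts are a blunt proxy for reviewability, so treat a failure as a prompt to split the work rather than as a hard law.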

The real challenge is turning chaos into a system rather than just writing code. If your real pain is that requirements are fuzzy and outputs drift, consider skipping the messy middle. Our Packets workflow is designed to turn a rough idea into structured build-ready specs, then generate the app, then bring in humans when needed. The walkthrough in how an AI app builder works mirrors this same loop.

Best tools and resources for Claude Code best practices

These are the most useful building blocks if you want Claude-assisted coding to be consistent across projects.

1) QuantumByte - for turning "Claude output" into a real product

If you are serious about shipping a sellable app, the best practice is not "ask for better code." It is "make the build spec unambiguous." That is where QuantumByte wins.

Why it is #1: it reduces the most expensive Claude failure mode, which is vague intent turning into sprawling rework. QuantumByte pushes you to define users, workflows, and rules up front, then uses AI to build from that structure. And if you hit the edge of what AI can do today, our development team can finish the hard parts.

Good fit when:

- You want leverage: You are productizing a niche workflow into a Micro SaaS, like the ideas in micro SaaS niches.

- You are building internal tooling: You need a durable system, not a fragile demo.

Explore the pricing plans to get started.

2) Claude Code documentation

Start here if you are setting expectations with yourself or your team. The Claude Code overview clearly frames Claude Code as an agent that can edit files, run commands, and work in CI. The takeaway: use Claude for actions, not just answers. If you want reproducible work, prefer "run X, change Y, commit with message Z" over "tell me what you would do."

3) Model Context Protocol for safer tool and data access

MCP gives you control. When you let an agent reach into external systems, you want structured boundaries, not ad hoc scripts.

The Model Context Protocol (MCP) is positioned as an open standard for connecting AI applications to external systems. In practice, it gives you a cleaner path to "Claude can access X tool" without turning your environment into a pile of one-off integrations.

- Takeaway: Prefer structured tool calls and make agent capabilities explicit and auditable.

4) Continue for enforcing rules on pull requests

Claude can write code. Your real challenge is getting consistent standards across every pull request.

Continue is useful when:

- You want guardrails at PR time: The right place to enforce conventions is before merge.

- You need auditable review: Agent logs and structured review steps make results easier to trust.

5) pre-commit for making "quality checks" automatic

If Claude is committing changes, you want a safety net that runs every time, for every developer.

pre-commit supports a simple but powerful best practice:

- Automate the boring correctness checks: Formatters, linters, secret scanners, and basic tests should run before code even reaches code review.
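As a sketch of the mechanism, here is a tiny secret check you could register as a `repo: local` hook in `.pre-commit-config.yaml`. By default pre-commit passes the staged file paths to the hook as arguments and blocks the commit when the hook exits non-zero. The patterns in `SECRET_PATTERNS` are illustrative assumptions that catch only obvious cases; a dedicated scanner such as detect-secrets or gitleaks is the better choice for a real repository.

```python
import re
import sys

# Illustrative patterns only; real scanners ship far larger, tested rule sets.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hard-coded credential": re.compile(
        r"""(?i)(api[_-]?key|secret|password|token)\b\s*[:=]\s*['"][^'"]{8,}['"]"""
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for every line that matches a pattern."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits


def main(filenames: list[str]) -> int:
    """Scan the files pre-commit passes in; a non-zero return blocks the commit."""
    failed = False
    for path in filenames:
        try:
            with open(path, encoding="utf-8") as handle:
                text = handle.read()
        except (OSError, UnicodeDecodeError):
            continue  # This sketch skips binary or unreadable files.
        for lineno, name in find_secrets(text):
            print(f"{path}:{lineno}: possible {name}", file=sys.stderr)
            failed = True
    return 1 if failed else 0
```

The hook's entry point would call `sys.exit(main(sys.argv[1:]))`. Because it runs for every developer on every commit, the check also covers commits Claude makes on your behalf.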

6) GitHub Actions for CI that Claude cannot "argue with"

Your definition of done must be executable. CI is the enforcement mechanism.

GitHub Actions helps you lock in best practices like:

- Make tests non-optional: If the workflow is required, "it passes locally" stops being a debate.

- Keep releases repeatable: Build, test, and deploy in the same way every time.
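A required workflow can also enforce a "tests for behavior changes" rule. The Python sketch below assumes a layout with application code under `src/` and tests under `tests/` (or files named `test_*` or `*_test.py`), and fails when source files change without any test file changing. In a GitHub Actions job you would typically check out full history (for example `fetch-depth: 0` on `actions/checkout`) so the base branch is available for the diff; the `origin/main` base ref is an assumption.

```python
import subprocess
import sys
from pathlib import PurePosixPath

# Assumed layout: application code in src/, tests in tests/. Adjust for your repo.
SOURCE_PREFIXES = ("src/",)
TEST_PREFIXES = ("tests/",)


def is_test_file(path: str) -> bool:
    """Treat files under tests/, or named test_* or *_test.py, as tests."""
    name = PurePosixPath(path).name
    return (
        path.startswith(TEST_PREFIXES)
        or name.startswith("test_")
        or name.endswith("_test.py")
    )


def missing_tests(changed_paths: list[str]) -> bool:
    """True when source files changed but no test file changed alongside them."""
    touched_source = any(
        path.startswith(SOURCE_PREFIXES) and not is_test_file(path)
        for path in changed_paths
    )
    touched_tests = any(is_test_file(path) for path in changed_paths)
    return touched_source and not touched_tests


def main(base_ref: str = "origin/main") -> int:
    """Diff this branch against where it forked from base_ref; return an exit code."""
    out = subprocess.run(
        ["git", "diff", "--name-only", f"{base_ref}...HEAD"],
        capture_output=True, text=True, check=True,
    ).stdout
    changed = [line for line in out.splitlines() if line]
    if missing_tests(changed):
        print("Source changed without any test changes.", file=sys.stderr)
        return 1
    return 0
```

Mark the job as a required status check in your branch protection rules so it cannot be skipped. The heuristic is deliberately crude: it proves someone touched tests, not that the new tests cover the change.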

A copy-paste checklist for Claude Code sessions

Use this as the top of your prompt, or paste it into your repo as CLAUDE_RULES.md (or into CLAUDE.md, which Claude Code loads automatically at the start of a session).

| Category | Your rule (copy/paste) | Why it matters |
|---|---|---|
| Goal | Define the outcome in one sentence and list 3 acceptance criteria. | Claude needs a finish line you can verify. |
| Scope | List files to edit and files not to touch. | Prevents accidental rewrites. |
| Plan first | Ask for a step-by-step plan before changes. | Catches bad approaches early. |
| Small diffs | Limit each change to one feature or one fix. | Makes review possible. |
| Tests | Require new or updated tests for behavior changes. | Protects you from regressions. |
| Commands | Ask Claude to list commands it will run before it runs them. | Prevents destructive actions. |
| Security | Never paste secrets. Treat auth and access control changes as manual-review-required. | Agent mistakes here are expensive. |
| Output format | Request a short summary, a file-by-file diff outline, and next steps. | Keeps you oriented and speeds review. |
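If you reuse the checklist often, it can help to fill it in as structured data and render the prompt header from it, so a missing row is caught before the session starts. This is a minimal Python sketch; `TaskSpec` and its fields are hypothetical names that mirror the table rows, and the "at least three acceptance criteria" check follows the Goal row.

```python
from dataclasses import dataclass, field


@dataclass
class TaskSpec:
    """Hypothetical container whose fields mirror the checklist rows."""

    goal: str
    acceptance_criteria: list[str]
    files_to_edit: list[str]
    do_not_touch: list[str] = field(default_factory=list)

    def problems(self) -> list[str]:
        """Return every checklist rule this spec breaks; empty means ready."""
        issues = []
        if not self.goal.strip():
            issues.append("goal is empty")
        if len(self.acceptance_criteria) < 3:
            issues.append("list at least 3 acceptance criteria")
        if not self.files_to_edit:
            issues.append("name the files Claude may edit")
        overlap = set(self.files_to_edit) & set(self.do_not_touch)
        if overlap:
            issues.append(f"files both editable and off-limits: {sorted(overlap)}")
        return issues

    def to_prompt(self) -> str:
        """Render the prompt header, refusing to render an incomplete spec."""
        issues = self.problems()
        if issues:
            raise ValueError("; ".join(issues))
        lines = [f"Goal: {self.goal}", "Acceptance criteria:"]
        lines += [f"  {i}. {c}" for i, c in enumerate(self.acceptance_criteria, start=1)]
        lines.append("Edit only: " + ", ".join(self.files_to_edit))
        if self.do_not_touch:
            lines.append("Do not touch: " + ", ".join(self.do_not_touch))
        lines += [
            "Reply with a step-by-step plan and a test plan before changing anything.",
            "List every command you will run before you run it.",
            "Finish with a short summary, a file-by-file diff outline, and next steps.",
        ]
        return "\n".join(lines)
```

Generating the header from data means every session starts with the same rules, which is the whole point of a fixed checklist.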

If you want a structured way to turn a rough idea into specs that an AI can build reliably, borrow the prompt structure from AI app builder prompts. The same "clarity first" approach works whether you are prompting Claude Code or building an app end-to-end.

Security best practices when an agent can run commands

Agentic coding changes the risk profile. You are not only reviewing code. You are managing autonomy.

Anchor your security review in two places:

- LLM-specific risks: The OWASP Top 10 for Large Language Model Applications highlights failure modes like prompt injection, insecure output handling, and excessive agency.

- Software development fundamentals: NIST’s Secure Software Development Framework (SSDF) lays out practices you can integrate into any software development life cycle.

Practical guardrails that pay off immediately:

- No secrets in prompts: Treat prompts like logs. Assume they can leak.

- Lock down tool permissions: Give Claude only the tools it needs, not everything it could use.

- Human review for auth: Anything involving access control, authentication, and authorization should be manually reviewed.

- Dependency discipline: New packages should be justified. Supply chain risk is real.
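For the tool-permissions guardrail, Claude Code's own permission settings are the first place to configure what the agent may run. The sketch below illustrates the same allowlist idea independently of any tool: a command runs without a human only if it starts with an approved prefix and contains no shell metacharacters that could chain or redirect it. `ALLOWED_PREFIXES` is a hypothetical example list.

```python
import shlex

# Hypothetical allowlist: argument prefixes the agent may run without asking.
ALLOWED_PREFIXES = [
    ["git", "status"],
    ["git", "diff"],
    ["pytest"],
    ["ruff", "check"],
]

# Characters that can chain, background, redirect, or substitute commands.
SHELL_METACHARACTERS = (";", "&", "|", ">", "<", "`", "$(", "\n")


def needs_human_approval(command: str) -> bool:
    """Return False only for commands that start with an allowlisted prefix."""
    if any(token in command for token in SHELL_METACHARACTERS):
        return True
    try:
        argv = shlex.split(command)
    except ValueError:
        return True  # Unbalanced quotes: refuse to guess what would run.
    return not any(argv[: len(prefix)] == prefix for prefix in ALLOWED_PREFIXES)
```

Prefix matching is coarse: allowing `git diff` allows it with any arguments, so keep the list short and review changes to it like code.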

If you are building for regulated teams or multi-department rollouts, it can be simpler to treat the agent as a prototyping layer, then move to an operational platform with governance. That is where QuantumByte Enterprise fits better than a pile of scripts.

Common ways Claude-assisted coding goes wrong and how to fix it

These are patterns you can spot quickly.

- Vague request, vague output: Fix it by rewriting the task into acceptance criteria and concrete constraints.

- Large refactor "to make it cleaner": Fix it by enforcing small diffs and asking for a plan with rollback steps.

- Hidden breaking changes: Fix it by requiring tests first, or at least a reproduction script.

- Confident but incorrect behavior: Fix it by forcing Claude to cite where in the codebase the behavior is implemented, then verify.

- PRs that are impossible to review: Fix it by splitting work into a sequence of PRs, each with one goal.
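To make "cite where the behavior is implemented, then verify" cheap, you can check the citations automatically before reading them. This Python sketch assumes you asked Claude to cite locations as `path/to/file.py:LINE`; it confirms each cited line exists and mentions the symbol you asked about. The citation format and the function names are assumptions for illustration.

```python
import re
from pathlib import Path

# Assumed citation format in Claude's answer: "path/to/file.py:42".
CITATION = re.compile(r"(?P<path>[\w./-]+\.\w+):(?P<line>\d+)")


def line_mentions(lines: list[str], lineno: int, symbol: str) -> bool:
    """True if 1-based line `lineno` exists and contains `symbol`."""
    return 1 <= lineno <= len(lines) and symbol in lines[lineno - 1]


def verify_citations(answer: str, repo_root: str, symbol: str) -> dict[str, bool]:
    """Map each 'path:line' cited in `answer` to whether it checks out on disk."""
    results = {}
    for match in CITATION.finditer(answer):
        path = Path(repo_root) / match.group("path")
        if not path.is_file():
            results[match.group(0)] = False
            continue
        lines = path.read_text(encoding="utf-8", errors="replace").splitlines()
        results[match.group(0)] = line_mentions(lines, int(match.group("line")), symbol)
    return results
```

A passing check only proves the citation points at a plausible line; you still read the code to confirm it does what Claude claims.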

If you are building SaaS, the failure mode is even more painful because architecture mistakes compound. Use a reference like the multi-tenant architecture guide to sanity-check decisions before Claude implements them.

Where to go from here

You now have a practical definition of Claude Code best practices, a workflow you can repeat in any repository, and a short list of tools that make quality enforceable.

If your next step is to turn a Claude-assisted prototype into something you can actually sell or run internally, do it the disciplined way:

- Start with a structured spec: Define users, workflows, constraints, and edge cases before you let the agent run.

- Build in small increments: Keep each change small enough to review in minutes, not hours.

- Enforce tests and CI: Treat automated checks as your non-negotiable definition of done.

- Add security guardrails: Limit permissions, avoid secrets in prompts, and manually review auth.

When you want the fastest path from idea to production without getting trapped in prompt churn, start a Packet and let the system guide the structure: QuantumByte Packets.

For more playbooks on productizing and automation, browse our articles library and pull ideas from the business process automation guide.

Frequently Asked Questions

What are the most important Claude Code best practices to start with?

Start with three: define acceptance criteria, force a plan before code, and require tests. Those three practices reduce bad scope, bad approach, and silent regressions.

Should I let Claude Code commit directly to my repository?

Yes, but only with guardrails. Use branches, require pull requests, and enforce CI checks. Pair it with pre-commit hooks so basic quality checks run automatically before commits.

How much context should I give Claude Code?

Give bounded context. Provide the exact files, current behavior, constraints, and expected behavior. Avoid dumping the entire repo unless the task truly needs it.

Can Claude Code handle architecture decisions for a SaaS product?

It can propose options, but you should decide. Architecture mistakes compound quickly, especially in multi-tenant systems. Use Claude to explore tradeoffs, then anchor decisions in a clear architecture plan before implementation.

What is the fastest way to turn an AI-built prototype into a real app?

Use a structured specification workflow, then build incrementally with tests and CI. If you want that structure baked into the build process, QuantumByte Packets can take you from idea to build-ready documentation and a working app, with a path to human engineering support when needed.